Abstract

To fly an unmanned aircraft system (UAS), commonly referred to as a “drone,” the Federal Aviation Administration requires pilots to pass a knowledge test. However, there is no official requirement at the state or federal level for drone operators to demonstrate the ability to operate a UAS. The National Institute of Standards and Technology (NIST) has created an exam that public and private entities can use to assess basic UAS flight proficiency. NIST does not provide a scoring recommendation and leaves it to the exam user to determine the minimum criteria to pass. There is limited literature on scoring recommendations and none pertaining to institutions of higher education. This paper fills this gap by evaluating the performance of carefully selected UAS pilots who participated in the study. Their performance was divided by percentile and used to provide recommended benchmarks that community colleges can use with their flight skills assessments. These benchmarks are particularly useful when the NIST exam is used for graded assessments.

Keywords: UAV, UAS, NIST, drone, proficiency

© 2023 under the terms of the J ATE Open Access Publishing Agreement

1. Introduction

Unmanned aerial vehicles (UAVs), commonly referred to as drones, are used in many applications. UAV refers to the drone or the aircraft itself. An unmanned aircraft system (UAS) is the total system that includes the drone, controller, and anything else that is required to keep the drone in flight. Some applications or uses for drones include delivering life-saving medical packages [1], retail deliveries [2], supporting first responders during disasters [3], inspections of critical infrastructure such as bridges and highways [4], monitoring construction projects [5], traffic monitoring [6], and land surveying [7]. As of December 2022, there were 870,389 commercial and recreational drones registered in the US [8].

Pilots are required to obtain a “remote pilot certificate” from the Federal Aviation Administration (FAA) to fly a drone for commercial purposes in the US. To earn the certificate, pilots must pass a computerized knowledge test conducted at a third-party testing site. A practical examination is not required to operate a drone for commercial use, which presents a source of risk: pilots may be licensed without ever having operated a drone. Because flight proficiency is not part of the license, there is no federal guidance on the minimum level of flight proficiency pilots need before operating a UAS in the national airspace. The lack of a convenient method of assessing flight proficiency creates a risk for organizations with drone pilots and the drone community as a whole [8]. This is an acute issue for two-year institutions with UAS coursework. Currently, there are no recommendations for what level of flight skills students should have upon completing an effective drone program.

This study addresses this issue by answering these two questions.

- Can a proficiency exam be created and recommended to two-year institutions?

- Can a tier level of proficiency with scoring metrics be identified to help train remote pilots?

For this study, the research institution used a sample of 52 pilots selected from a general population of drone pilots primarily working for state and local government agencies. The pilots held a Part 107 remote pilot certificate but did not have significant experience operating a UAS. These criteria were chosen intentionally for benchmarking because they represent the proficiency of entry-level UAS pilots.

2. Background and literature review

2.1. Drones as an emerging technology

UASs have become commonplace over the past several years. As a result of the expansion of uses and applications, drones have penetrated a wide range of industries. This growth in services and popularity has raised concerns about drone safety and effectiveness and has increased demand for a means to assess pilot competence. As organizations increase their use of UAS technology, it has become incumbent on two-year institutions to incorporate this technology into their curriculum.

The FAA considers both a UAS and a crewed plane to be “aircraft”; however, it treats their licensure differently. It is important to understand the gap between the extensive knowledge and practical requirements for a manned aircraft license and the absence of a practical examination requirement for a UAS license. Manned aircraft pilots must demonstrate extensively that they can fly, whereas drone pilots are not held to any flight proficiency standard.

To obtain a manned aircraft certification under Title 14 Code of Federal Regulations Part 61, the FAA requires at least 40 hours of flight time, including flight training with an instructor and solo flight time. After the pilots have completed ground school testing, they can partner with an examiner to complete the practical exam. Even when a pilot receives a private license, it only licenses them to fly that specific type of manned aircraft.

2.2. Advanced Technological Education (ATE)

The National Science Foundation’s (NSF) ATE program was created to support two-year higher education institutions and help fund the education of technicians for high-technology fields [9]. The ATE program supports the education of these technicians, who are critical to economic development and national security. Congress mandated ATE as one of the first congressionally endorsed programs at the NSF in 1992 [10]. The ATE program invites research proposals that advance the body of knowledge concerning technical education. For this paper, we will expand on ATE’s support of technical education with respect to UASs.

Since 2008, ATE has awarded 30 grants to support the development of technicians in the UAS industry, ranging from geospatial data systems to agricultural development, workforce planning, and other autonomous technologies [11]. Figure 1 shows an increase in the number of awards, primarily starting in 2015 and reaching a peak in 2019 at six awards per year. The COVID-19 pandemic most likely significantly impacted the number of awards granted in 2020 and 2021; however, there has been a rebound in 2022, with three awards within the first four months of the year. Monitoring the number of awards by ATE supports this research and shows the importance of two-year institutions educating drone pilots.

2.3. Two-year institutions supporting this growing industry

Community colleges across the US are developing drone programs to support these growing industries, and ATE has played a large part in funding these initiatives. Since 2008, ATE has awarded funding to 21 different colleges. The Old Dominion University Research Foundation and Northland Community & Technical College have received the most awards to date, with three awards each. For this research, monitoring these awards shows the emphasis these institutions and the NSF place on the UAS industry and the importance of education with respect to the UAS industry.

2.4. What areas are funded by these institutions?

For this research, each award provided by ATE was assigned to one of five major groups: Geospatial, Drone Workforce, Agri-Drone, Autonomous Tech, and Drone Sensors. As shown in Figure 2, Geospatial is the leading category; these awards center on geospatial data acquisition, analysis, and exploitation with the use of UASs. Other geospatial-related awards focus on creating student opportunities within this technical workforce. The geospatial industry is being fueled by the increasing number of drones being manufactured and registered and by the new ways they make it possible to apply geospatial technologies.

2.5. Why does the industry need two-year institutions to have UAS training programs?

In the US, pilots flying a drone for anything other than recreational purposes must earn a Part 107 remote pilot certificate from the FAA. To earn their remote pilot certificate, pilots must take a computerized knowledge test at an FAA-approved testing site. Pilots must also register their drone online through the FAADroneZone website before operating it commercially. Currently, there is no practical examination to identify flight proficiency, as there is with a manned aircraft license. If left unaddressed, this creates a potential risk for commercial operators with respect to safety, skill assessment, and risk management. Because the FAA does not require a minimum level of flight proficiency, employers, organizations, and higher education institutions must develop their own standards for what constitutes an acceptable level of flight proficiency.

2.6. National Institute of Standards and Technology (NIST)

NIST was founded in 1901 and is now part of the US Department of Commerce [12]. NIST’s mission is to promote innovation and competitiveness by advancing science, standards, and technology in ways that enhance security and improve quality of life [12]. As part of this work, NIST develops the measures and means to quantitatively evaluate robotic systems and UAS pilot proficiency [12].

NIST has created low-cost and simple test methods for UASs that assist in objectively measuring and comparing system capabilities and remote pilot proficiency. Because NIST does not train or certify pilots, it leaves this final task to other standards development organizations, such as the Airborne Public Safety Association (APSA) and National Fire Protection Association, to determine which metrics are appropriate for individuals and organizations to adopt.

The following sections describe the Open Test Lane and the Basic Proficiency Evaluation for Remote Pilots (BPERP). Because NIST recommends no scoring metrics, it is left to administrators to determine an acceptable passing rate.

2.7. NIST Open Test Lane

The NIST Open Test Lane includes five different maneuvers, as shown in Figure 3. Five bucket stands are constructed so that an image target sits at the bottom of each bucket. The pilots must perform these maneuvers while a proctor calls out instructions. The image targets must be captured clearly, with the drone positioned at a distance “S” from the bucket. The distance “S” is a predetermined variable that the proctor sets before the test begins. For example, “S” could be set to 10, 20, or 30 feet, and the drone must be at that distance from the buckets for a point to be awarded.

- Maneuver 1: The position test requires the pilot to capture images while moving forward and backward along a center lane.

- Maneuver 2: The traverse requires pilots to rotate in a pattern around the bucket stands 1, 2, and 3.

- Maneuver 3: The orbit test requires a rotation around bucket stand 3 clockwise and counterclockwise.

- Maneuver 4: The spiral test calls for the pilot to fly around all four bucket stands in order, alternating from clockwise to counterclockwise.

- Maneuver 5: The recon test requires an in-line flight from the launch pad to the last bucket stand.

2.8. NIST Basic Proficiency Evaluation for Remote Pilots (BPERP)

Created by NIST, the BPERP is a combination of the first two maneuvers from the Open Test Lane described in Section 2.7. NIST does not define scoring or timing criteria, but the test can be administered in 10 minutes using three bucket stands, a 50-foot tape measure, and a stopwatch. The BPERP takes up an area of 50 feet by 20 feet and can easily be conducted indoors or outdoors [13]. The tests are administered with a visual observer present. The pilot flies the flight paths in alignment with the buckets. Each alignment requires the pilot to capture a single image of a green ring inside the bucket. Because NIST does not certify pilots or recommend scoring metrics, other nationally recognized and reputable organizations, such as APSA, provide flight certification. APSA recommends that, to pass the BPERP exam, the remote pilot capture 32 of the 40 image alignments with accurate landings within the designated 10-minute time limit.

APSA is a nonprofit organization founded in 1968 that supports and encourages the use of aircraft for public safety. APSA’s goal is to support, promote, and advance the safe and effective use of manned and unmanned aircraft by governmental agencies in support of public safety operations through training, networking, advocacy, and educational programs [14]. Currently, it has over 3,000 members at both the international and local levels. APSA provides networking systems, educational seminars, and product expositions that members find invaluable [14].
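The APSA pass recommendation described above (32 of 40 image alignments within the 10-minute limit) can be expressed as a simple check. The following Python sketch is illustrative only: the record layout and the names `BperpAttempt` and `passes_apsa_criterion` are assumptions made for this example and are not part of any NIST or APSA material, and the landing-accuracy portion of the recommendation is not modeled.

```python
from dataclasses import dataclass

# Thresholds taken from the APSA recommendation quoted in the text.
REQUIRED_ALIGNMENTS = 32      # minimum image alignments to pass
TOTAL_ALIGNMENTS = 40         # image alignments available in the BPERP
TIME_LIMIT_SECONDS = 10 * 60  # designated 10-minute limit


@dataclass
class BperpAttempt:
    """One pilot's BPERP attempt (hypothetical record layout)."""
    alignments_captured: int
    elapsed_seconds: float


def passes_apsa_criterion(attempt: BperpAttempt) -> bool:
    """Return True when both the image-score and time requirements are met.

    Landing accuracy is part of the APSA recommendation but is not modeled here.
    """
    if not 0 <= attempt.alignments_captured <= TOTAL_ALIGNMENTS:
        raise ValueError("alignments_captured must be between 0 and 40")
    meets_score = attempt.alignments_captured >= REQUIRED_ALIGNMENTS
    meets_time = attempt.elapsed_seconds <= TIME_LIMIT_SECONDS
    return meets_score and meets_time
```

Keeping the score and time checks separate makes it easy to report them individually, which matters when one requirement turns out to be harder to meet than the other.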

3. Methodology

3.1. Data collection

Three NIST Open Test Lanes were constructed at a soccer field on the research institution’s campus. The soccer field was ideal for the study because it is spacious and level. The experiment was conducted on a sunny day with a light breeze. Each lane was assigned an administrator to give flight instructions, record the times, and enforce safety precautions. Each pilot was assigned to a test lane, and an additional pilot recorded the exam with a secondary drone positioned at an altitude of 250 feet. The research institution provided each pilot with either a DJI Mavic [15] or a DJI Phantom [16] drone to complete the test. The two drones used in the study have similar characteristics, with any differences considered negligible.

The pilots who participated in the study were Part 107 pilots who predominantly worked for state and local government agencies. Most had minimal flight experience but were considered competent to fly a drone by their agency. Because the participants were novice pilots, the sample is comparable to students at two-year institutions.

Once the pilots completed the five maneuvers, they were directed to a nearby research lab to perform the test using a recently developed simulator. The simulator was programmed to represent the test conditions closely. The pilots were given instructions through the simulator, just as during the outdoor test. Instructions were provided on the simulator screen in addition to the auditory instructions. The script mirrored the text on the NIST scoring sheet that was used with the outdoor open lane test. The simulator controller had the same standard configuration as the drone controllers. Participants captured images using a button on the controller similar to the one on the actual drone controller. The camera could be pitched up or down with the roll button on the left side of the controller. Once the image was taken, a “camera click” could be heard before the next instruction began. Specific weather conditions could be set in the simulator; a clear day with no wind was set for this experiment to resemble the conditions of the outdoor open-lane examinations. The research administrators used laptops with sufficient RAM to run the necessary software. Lab assistants were available to assist with logistics, but the simulator was entirely self-administered.

The research institution examined how strongly specific maneuvers and combinations of maneuvers were related to the overall NIST Open Lane Exam. The adjusted R-squared statistic provided a more accurate understanding of each maneuver or combination of maneuvers by comparing each of them with the overall five-maneuver NIST Open Lane Exam. RStudio was used to build the multiple linear regression model from a data set of the participants’ flight data.
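The adjusted R-squared comparison described above can be illustrated with a short sketch. The study itself used RStudio; the Python/NumPy version below is only an approximation of the idea. It assumes the dependent variable is the total time across all five maneuvers and the predictors are the times of a chosen subset of maneuvers. The function name `adjusted_r_squared` and the randomly generated times are assumptions for illustration and are not the study’s data or code.

```python
import numpy as np


def adjusted_r_squared(X, y) -> float:
    """Fit y ~ intercept + X by ordinary least squares and return adjusted R^2."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    if X.ndim == 1:
        X = X.reshape(-1, 1)          # treat a 1-D input as a single predictor
    n, p = X.shape                    # n observations, p predictors
    if n - p - 1 <= 0:
        raise ValueError("need more observations than predictors plus one")
    design = np.column_stack([np.ones(n), X])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    residuals = y - design @ coef
    ss_res = float(residuals @ residuals)
    ss_tot = float(((y - y.mean()) ** 2).sum())
    r2 = 1.0 - ss_res / ss_tot
    # Penalize R^2 for the number of predictors used.
    return 1.0 - (1.0 - r2) * (n - 1) / (n - p - 1)


# Made-up illustrative times in seconds, NOT the study's measurements:
# 30 hypothetical pilots, one column per maneuver (Man1..Man5).
rng = np.random.default_rng(0)
maneuver_times = rng.uniform(60, 240, size=(30, 5))
total_time = maneuver_times.sum(axis=1)

# Compare a two-maneuver subset (Man1 + Man2, the BPERP) with all five.
adj_r2_bperp = adjusted_r_squared(maneuver_times[:, :2], total_time)
adj_r2_all = adjusted_r_squared(maneuver_times, total_time)
```

Because the synthetic maneuver times are independent of one another, the two-maneuver subset explains far less of the total here than it would for real pilots, whose times on different maneuvers tend to move together.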

Percentiles were used to determine the scoring requirement and flight time requirement for each maneuver and for the different groups of maneuvers. Percentiles also describe how a score compares to other scores from the same set, which helps build the different levels of proficiency and group pilots based on their flight accuracy and expertise.
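As a rough illustration of the percentile approach described above, the sketch below derives time cutoffs from a set of completion times and looks up the fastest tier a given time qualifies for. The percentile levels (10, 25, 50, 75, 90), the function names, and the sample times are all assumptions chosen for this example; they are not the study’s tiers or data. Because a lower time is better, the 10th percentile of times corresponds to the fastest 10% of pilots.

```python
import numpy as np


def time_thresholds(times_seconds, percentiles=(10, 25, 50, 75, 90)):
    """Map each percentile to the completion time (seconds) at that percentile."""
    times = np.asarray(times_seconds, dtype=float)
    cutoffs = np.percentile(times, percentiles)
    return {pct: float(cutoff) for pct, cutoff in zip(percentiles, cutoffs)}


def fastest_tier(time_seconds, thresholds):
    """Return the smallest percentile whose cutoff the time meets, else None."""
    for pct in sorted(thresholds):
        if time_seconds <= thresholds[pct]:
            return pct
    return None


# Made-up completion times in seconds, NOT the study's data.
sample_times = [300, 360, 420, 480, 540, 600, 660, 720, 780, 840, 900]
thresholds = time_thresholds(sample_times)
```

An instructor could round the resulting cutoffs to convenient values before using them in the field, and map each tier to a grade according to the needs of the course.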

4. Results

The first objective was to determine how well the BPERP predicts flight performance compared with the more elaborate open lane suite. The next objective was to understand which of the five maneuvers (individually) or which combination of maneuvers would best estimate the average of all five of the NIST Open Test Lane maneuvers. Finally, we would identify an appropriate pass rate based on the sample of novice practitioners that were examined.

4.1. Statistical goodness of fit

A statistical “goodness of fit” analysis from the 90-flight test was conducted. Understanding how well each of the five NIST Open Test Lane maneuvers fits the overall five-maneuver data set (the dependent variable) helps establish a priority list of maneuvers from which instructors can choose. Providing instructors with a shorter exam saves time, space, and financial resources. Just as importantly, the shorter exam has near-equivalent assessment value compared with the overall NIST Open Test Lane. Each of the five maneuvers has two criteria that should be met: score and time. A proctor reviews the images of the targets to determine whether they meet the scoring criteria (pass or fail). The second, and more important, criterion is the time elapsed during the completion of the maneuver.

Table 1 shows how well each maneuver represented the overall five-maneuver NIST Open Test Lane exam with respect to flight time. We used the score from the overall five-maneuver Open Test Lane exams as the dependent variable. The adjusted R-values indicate the strength of the linear relationship between the overall five-maneuver exam and either a single maneuver or a grouping of maneuvers. Two-year institutions may choose an individual maneuver or a smaller subset of maneuvers if they have limited time, space, or financial resources, and these values will assist them in deciding which exam to choose.

Table 1. Adjusted R-values for flight times of individual maneuvers and combinations of maneuvers

4.2. Why is time more important?

When analyzing the data from BPERP exams, the study found that 93% of participants passed the image scoring portion of the test, but only 48% met the time requirement. Further, nearly 44% of all flights recorded passed the image scoring portion of the exam with a perfect score. This led the researchers to believe that meeting the time requirement is much more difficult than meeting the image scoring requirement. So, for this research, more weight and significance are placed on the time requirement than the scoring requirement.

4.3. Flight time ranked individually

When evaluating the pilots’ times, the research indicated that each maneuver has a close goodness of fit compared to all five maneuvers from the Open Test Lane exam. After the maneuvers were ranked by goodness of fit, the researchers determined how closely the current BPERP test correlated with the overall five-maneuver Open Test Lane. Because NIST had already established the BPERP, the researchers focused on this test to determine whether another maneuver or combination of maneuvers should be used instead.

After examining the adjusted R-values for flight times, the current BPERP (Man1 + Man2) indicates a good fit to times from the entire open lane suite of maneuvers. Table 2 shows a high adjusted R-value of 0.9213 for the participants’ times compared to the overall NIST Open Test Lane exam. Based on this value, this study identifies the BPERP as a good predictor of flight proficiency compared to the full open lane suite. This result helps validate that the BPERP can be used to create not only a basic proficiency exam but also a multitiered flight proficiency exam with various levels that pilots can achieve.

Table 2. Times for BPERP-adjusted R-value (combination of Man1 + Man2)

5. Discussion

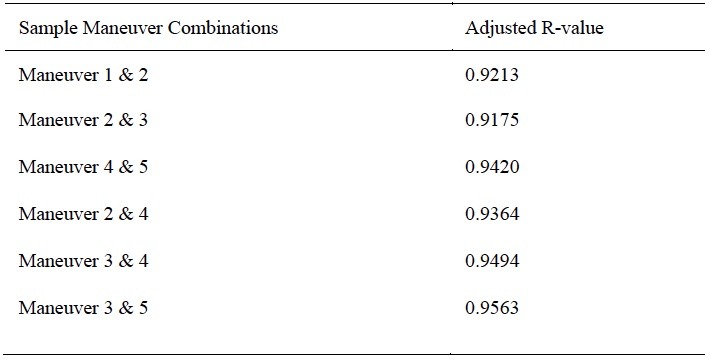

The flight combinations in Table 3 represent a diverse range of flight patterns, maneuvers, and difficulties. Because there are five different flight maneuvers, this would result in 12 different combinations of flight maneuvers; the researchers selected the combinations shown based on the criteria of pattern, maneuver, and difficulty.

Table 3. Adjusted R-values for flight times across a sample of maneuver combinations

Institutions can use Tables 4 and 5 to create a customized training or testing course outline. The adjusted R-values for scoring and flight times can guide the choice between individual maneuvers and combinations of maneuvers.

Table 4. Recommended testing outline for image scoring

Table 5. Recommended testing outline for flight times

Table 6 breaks down the percentiles and the flight times associated with each percentile. When APSA’s current BPERP test is compared against these times, the research shows that it falls at the 50th percentile, which helps establish it as a basic or beginner level of proficiency.

Table 6. Percentile of times for establishing different proficiency levels

To provide instructors at two-year institutions with a benchmark for flight proficiency, the researchers established the proficiency level and grade recommendation shown in Table 7. The grade recommendation is based on the 10% percentile tiers of the sample data. The times have been rounded for ease of use in the field or classroom setting.

Table 7. Recommended proficiency levels using the BPERP as the basis

6. Conclusion

For instructors at two-year institutions looking to establish their UAS training criteria, the complete NIST Open Test Lane exam may be a natural starting place. However, the full five-maneuver open lanes can be time-consuming to build and set up and require more space than the BPERP.

The good news is that other available options are statistically similar in predicting flight performance and can be used to establish a reputable training program with fewer resources. Returning to the five maneuvers that constitute the NIST Open Test Lane, the researchers present different combinations of maneuvers that are quicker to set up, less expensive, and require less space to administer. For example, the first three maneuvers require only three bucket stands, take up roughly 50 feet in length and 20 feet in width, and can be administered in approximately 10 minutes. Including maneuvers four and five, by contrast, requires a total of 100 feet in length and more time and resources.

In conclusion, the flight times for our sample participants have been broken into percentiles to establish a proficiency scoring method. The top 10% of pilots completed the BPERP test in five minutes and nine seconds or less. The remaining levels of proficiency were built by breaking the dataset into four additional parts by percentile of the pilots’ flying times. This is intended to give instructors at two-year institutions the ability to create different levels of pilot proficiency.

Acknowledgments

Disclosures. The authors declare no conflicts of interest.

1. Z. Ghelichi, M. Gentili, and P. B. Mirchandani, “Logistics for a fleet of drones for medical item delivery: a case study for Louisville, KY,” Computers & Operations Research 135, 105443 (2021). https://doi.org/10.1016/j.cor.2021.105443 (accessed Feb. 7, 2023).

2. B. Alkouz, B. Shahzaad, and A. Bouguettaya, “Service-based drone delivery,” in 2021 IEEE 7th International Conference on Collaboration and Internet Computing (CIC) (2021). https://doi.org/10.1109/cic52973.2021.00019

3. Federal Aviation Administration, “Unmanned aircraft systems (UAS),” https://www.faa.gov/uas.

4. Y. Li, M. M. Karim, and R. Qin, “A virtual-reality-based training and assessment system for bridge inspectors with an assistant drone,” IEEE Transactions on Human-Machine Systems 52(4), 591–601 (2022).

5. C. Wankmüller, M. Kunovjanek, and S. Mayrgündter, “Drones in emergency response – evidence from cross-border, multi-disciplinary usability tests,” International Journal of Disaster Risk Reduction 65, 102567 (2021).

6. A. Kumar, R. Krishnamurthi, A. Nayyar, A. K. Luhach, M. S. Khan, and A. Singh, “A novel software-defined drone network (SDDN)-based collision avoidance strategies for on-road traffic monitoring and management,” Vehicular Communications 28, 100313 (2021).

7. J. Burgett, B. Lytle, D. Bausman, S. Shaffer, and E. Stuckey, “Accuracy of drone-based surveys: structured evaluation of a UAS-based land survey,” Journal of Infrastructure Systems 27(2) (2021).

8. C. Dees and J. Burgett, “Using flight simulation as a convenient method for UAS flight assessment for contractors,” The Professional Constructor 47(1) (2022).

9. National Science Foundation, “Advanced technological education (ATE),” https://beta.nsf.gov/funding/opportunities/advanced-technological-education-ate.

10. National Science Foundation, https://www.youtube.com/watch?v=GJSBszibvqc.

11. National Science Foundation, “Advanced Technological Education Program brings two-year colleges and industry together to educate new workforce,” https://www.nsf.gov/news/news_summ.jsp?cntn_id=108192 (accessed Jan. 23, 2023).

12. National Institute of Standards and Technology, “About NIST,” https://www.nist.gov/about-nist.

13. A. Frazier, “A standard proficiency test for small drone pilots,” Vertical Magazine (2020, October 27).

14. APSA, “Mission / vision / values,” https://publicsafetyaviation.org/about-alea/mission-vision-values.

15. DJI, “Mavic 2 – product information,” https://www.dji.com/mavic-2/info (accessed Feb. 7, 2023).

16. DJI, “Phantom 4 Pro V2.0 – specifications,” https://www.dji.com/phantom-4-pro-v2/specs (accessed Feb. 7, 2023).